Why Groq

Groq is fast AI inference.

FAQts

What is Groq?

Groq is the AI infrastructure company that delivers fast AI inference.

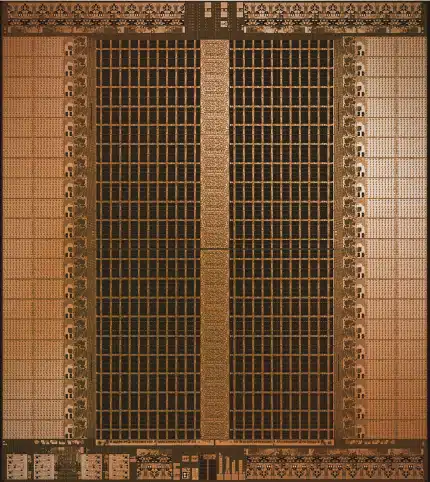

The LPU™ Inference Engine by Groq is a hardware and software platform that delivers exceptional compute speed, quality, and energy efficiency.

Groq, headquartered in Silicon Valley, provides cloud and on-prem solutions at scale for AI applications. The LPU and related systems are designed, fabricated, and assembled in North America.

What is the LPU™ Inference Engine?

An LPU Inference Engine, with LPU standing for Language Processing Unit™, is a hardware and software platform that delivers exceptional compute speed, quality, and energy efficiency. This new type of end-to-end processing unit system provides the fastest inference for computationally intensive applications with sequential components, such as AI language applications, including Large Language Models (LLMs).

Why is it so much faster than GPUs for LLMs and GenAI?

The LPU is designed to overcome the two LLM bottlenecks: compute density and memory bandwidth. For LLM workloads, an LPU has greater compute capacity than a GPU or CPU. This reduces the time needed to calculate each word, allowing sequences of text to be generated much faster. Additionally, eliminating external memory bottlenecks enables the LPU Inference Engine to deliver orders of magnitude better performance on LLMs compared to GPUs.

For a more technical read about our architecture, download our ISCA-awarded 2020 and 2022 papers.
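As a rough illustration of the two bottlenecks named above, the Python sketch below estimates a lower bound on the time to generate one token using a simple roofline-style model. It is a simplification, not a description of the LPU or of any specific GPU: the function name, the assumption of about two operations per parameter per token, and all hardware numbers are hypothetical.

```python
def min_seconds_per_token(param_count, bytes_per_param,
                          mem_bandwidth_bytes_per_s, peak_flops):
    """Roofline-style lower bound for generating one token at batch size 1.

    Assumes (as a simplification) that every weight is read once per token
    and that each weight costs about 2 floating-point operations.
    """
    # Time to stream all weights from memory once.
    memory_time = param_count * bytes_per_param / mem_bandwidth_bytes_per_s
    # Time to do the arithmetic (one multiply and one add per weight).
    compute_time = 2 * param_count / peak_flops
    # Whichever resource is slower sets the floor on per-token latency.
    return max(memory_time, compute_time)


# Hypothetical accelerator and model, chosen only to make the arithmetic easy:
# 70B parameters at 2 bytes each, 1 TB/s memory bandwidth, 100 TFLOP/s compute.
t = min_seconds_per_token(70e9, 2, 1e12, 100e12)
print(f"{t * 1000:.0f} ms per token")  # about 140 ms with these numbers
```

With these made-up numbers the memory term is 100 times larger than the compute term, which is why removing external memory bottlenecks matters so much for sequential text generation.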

Does Groq run standard machine learning frameworks?

Groq supports standard machine learning (ML) frameworks such as PyTorch, TensorFlow, and ONNX for inference. Groq does not currently support ML training with the LPU Inference Engine.

For custom development, the GroqWare™ suite, including Groq Compiler, offers a push-button experience to get models up and running quickly. For optimizing workloads, we offer the ability to hand-code to the Groq architecture, with fine-grained control of any GroqChip™ processor, enabling customers to develop custom applications and maximize their performance.

How do I get started using Groq?

We’re excited you want to get started with Groq. Here are some of the fastest ways to get up and running:

Developer access is fully self-serve through Playground on GroqCloud. There you can obtain your API key and access our documentation and our terms and conditions. Join our Discord community.

If you are currently using the OpenAI API, you just need three things to convert over to Groq:

- Groq API key

- Endpoint

- Model
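To make the three items above concrete, here is a minimal Python sketch that builds an OpenAI-style chat completion request pointed at Groq, using only the standard library. The base URL and model ID are assumptions not stated on this page, and the helper names `build_chat_request` and `send` are hypothetical; confirm the current endpoint and model list in the GroqCloud documentation.

```python
import json
import urllib.request

# Assumptions for illustration only; verify both in the GroqCloud docs.
GROQ_BASE_URL = "https://api.groq.com/openai/v1"  # assumed OpenAI-compatible endpoint
GROQ_MODEL = "llama2-70b-4096"                    # illustrative model ID


def build_chat_request(prompt, api_key,
                       base_url=GROQ_BASE_URL, model=GROQ_MODEL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # item 3: the model
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",  # item 2: the endpoint
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # item 1: the API key
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the reply text (needs network and a valid key)."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

If you already use an OpenAI client library, the same idea usually applies: keep your existing code and swap in the Groq API key, base URL, and model name.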

Do you need the fastest inference at data center scale? We should chat if you need:

✓ 24/7 support

✓ SLAs

✓ A dedicated account representative

Let’s talk to ensure we can provide the right solution for your needs. Please fill out the drop-down form and tell us a little about your project. After you submit it, a Groqster will be in touch with you shortly.

The Latest on Groq

Interested in covering Groq? Reach out to our PR team.

Game Changing Tech

GroqCloud™

GroqCloud is powered by a scaled network of Language Processing Units.

Leverage popular open-source LLMs like Meta AI’s Llama 2 70B, running up to 18x faster than other leading providers.

GroqRack™

The backbone of low latency, large-scale deployments.

- 42U rack with up to 64 interconnected chips

- End-to-end latency of only 1.6µs

- Near-linear multi-server and multi-rack scalability without the need for external switches

- 35kW max power consumption

Connect with Sales to learn about on-prem solutions

GroqNode™

Unprecedented low latency meets uncompromised scalability.

- 4U rack-ready scalable compute system featuring eight interconnected GroqCard™ accelerators

- 4kW max power consumption

GroqCard™

Ready, set, done. Guaranteed low latency.

- A single chip in a standard PCIe Gen 4 x16 form factor providing hassle-free server integration

- 375W max power consumption; 240W average power consumption

Available through our partner BittWare.

Language Processing Unit

- Exceptional sequential performance

- Single core architecture

- Synchronous networking that is maintained in large-scale deployments

- Ability to auto-compile LLMs with more than 50B parameters

- Instant memory access

- High accuracy that is maintained even at lower precision levels